AI Research

50 articles in this category

Google DeepMind Tackles AI Evaluation Challenges

Google DeepMind's Nicholas Kang and Michael Aaron discuss the challenges in current AI evaluation and Kaggle's innovative solutions like Hackathons, Agent Exams, and Game Arena.

Omar Sanseviero on Google's AI Strategy

Omar Sanseviero from Google DeepMind discusses Google's AI strategy, focusing on efficient models, multimodality, and open innovation in AI.

Graph Neural Networks Explained: GNN Basics & Models

Explore the essentials of Graph Neural Networks (GNNs), from their basic principles to key models like GCNs, GraphSAGE, GATs, GINs, and Transformers.

DeepMind's Scale: How Agents Run at Google

Google DeepMind's KP Sawhney and Ian Ballantyne reveal how they run AI agents at scale, discussing the architecture, tools, and challenges involved in managing complex automated tasks.

Google DeepMind on Building with Gen Media Stack

Google DeepMind's Paige and Guillaume showcase building generative media pipelines with Google's Gen Media Stack.

MOSS: Source-Level Self-Rewriting for Agents

MOSS enables AI agents to self-rewrite their source code, achieving significant performance gains and overcoming limitations of text-based evolution.

DeltaBox: Millisecond C/R for AI Agents

DeltaBox revolutionizes AI agent performance by introducing millisecond-level checkpoint/rollback via OS-level change-based state management.

MARL: The Scaffolding for Real-World AI

Multi-agent reinforcement learning in drone racing surpasses human pilots and drastically cuts collisions, paving the way for safer real-world AI co-existence.

Google DeepMind Taps AI for Asia-Pacific Climate Crisis

Google DeepMind launches an 'AI for the Planet' accelerator in Asia Pacific to help organizations tackle environmental risks with advanced AI.

Attractors Unlock Scalable Reasoning

Equilibrium Reasoners (EqR) leverage learned attractor landscapes to achieve scalable, adaptive test-time compute allocation, dramatically boosting accuracy on complex reasoning tasks.

Jure Leskovec on Relational Foundation Models

Jure Leskovec, AI researcher and Stanford professor, discusses Relational Foundation Models, a new AI approach for understanding complex enterprise data and its applications.

DeepWeb-Bench: Beyond Frontier LLM Claims

The DeepWeb-Bench benchmark exposes derivation and calibration as major LLM failure points, revealing domain specialization and the inadequacy of current evaluations.

Agent JIT Compilation for Web Automation

Agent just-in-time compilation revolutionizes web automation by compiling tasks into efficient code, yielding significant speed and accuracy gains.

Microsoft's Small AI Agents Get Smarter

Microsoft Research unveils MagenticLite, an AI system using smaller models for efficient browser and file system tasks, pushing agentic AI capabilities on user hardware.

OpenAI and Chip Ganassi Racing Tease Future Collaboration

OpenAI and Chip Ganassi Racing are joining forces for a research and development initiative, as teased in their new video 'R&D: Part 1'.

Chatbots Fail News Accuracy, Forum AI Study Reveals

A Forum AI study reveals major chatbots struggle with news accuracy, showing high failure rates on election-related prompts and reliance on biased sources.

Architecting LLM Agents: The SDB Primitive

Architecting reliable production LLM agents hinges on the Stochastic-Deterministic Boundary (SDB) and a catalog of runtime patterns.

AI Solves Erdős Unit Distance Problem: OpenAI Researchers Detail Breakthrough

OpenAI researchers reveal how AI has solved the complex Erdős Unit Distance Problem, a breakthrough with implications for mathematics and science.

Foundation Models Unlock Time Series Scaling

Toto 2.0 foundation models demonstrate remarkable scaling, achieving state-of-the-art forecasting performance across multiple benchmarks with a unified training approach.

GeoX: Self-Play for Geospatial Reasoning AI

GeoX, a novel self-play framework, achieves state-of-the-art geospatial reasoning performance without costly human annotations by generating and solving problems through executable programs.

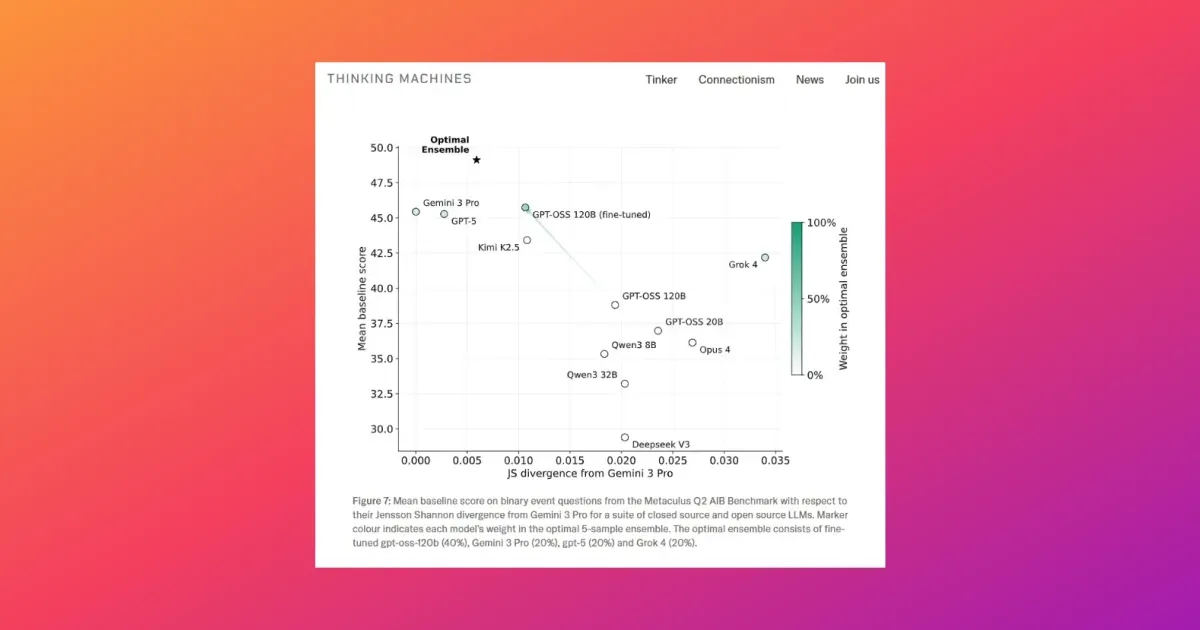

AI Models Now Predict the Future, Almost

Fine-tuning LLMs for forecasting tasks boosts their accuracy, with specialized models now rivaling top human predictors and enhancing ensemble predictions.

Google's Cormac Brick on Tiny LLMs for On-Device Agents

Google's Cormac Brick discusses the fine-tuning of Tiny LLMs for on-device agents, highlighting the benefits of LiteRT-LM and Gemma 4 for edge AI applications.

SpatioRoute VLM: Dynamic Prompting for Video QA

SpatioRoute VLM revolutionizes zero-shot spatial video question answering with dynamic prompt routing, achieving SOTA without fine-tuning or 3D sensors.

TaskGround: Bridging Scene Context and Action

TaskGround revolutionizes household AI by enabling compact models to interpret complex scenes, infer task structures, and act effectively, drastically improving performance and reducing costs.

LLM Protocols Revolutionize MARL State Recovery

LLM-driven Multi-Agent Communication (LMAC) uses LLM reasoning to create adaptive protocols, significantly improving state reconstruction and performance in MARL.

Code as the Agent Harness

Code is evolving into the foundational 'harness' for AI agents, enabling more executable, verifiable, and stateful systems across diverse applications.

Active Exploration Unlocks Spatial AI

New benchmark ESI-BENCH reveals active exploration is key to embodied spatial intelligence, exposing AI's 'action blindness' and metacognitive gaps.

Unlocking LLM Recall: Data Composition is Key

New research reveals a sigmoid scaling law for LLM factual recall, driven by model size and training data composition, explaining up to 94% of performance variance.

Google's AI Speeds Up Aging Research

Google DeepMind's Co-Scientist AI is revolutionizing cellular aging research by rapidly identifying genetic targets and analyzing experimental data, slashing research timelines.

Sam Altman Wins as Jury Sides With OpenAI Mission

A jury has dismissed Elon Musk's lawsuit against OpenAI, ruling he sued too late. This decision validates OpenAI's for-profit mission and removes a major legal obstacle.

GenMedia: DeepMind's Vernade on AI's Creative Future

Google DeepMind's Guillaume Vernade discusses the evolving potential of generative media (GenMedia) and its role in augmenting human creativity.

Anthropic Explains Long-Running AI Agents

Anthropic's Ash Prabaker and Andrew Wilson discuss building AI agents that can operate for hours without losing focus or their objectives.

Shodh-MoE: Unlocking Universal SciML

Shodh-MoE's sparse activation architecture resolves multi-physics interference in SciML, enabling universal foundation models with guaranteed physical properties.

Unified Embodied AI: Pelican-Unified 1.0

Pelican-Unified 1.0, the first unified embodied foundation model, achieves SOTA performance by integrating VLM, reasoning, and generation, proving unification enhances rather than compromises specialist strengths.

Viverra: Verifying AI-Generated Code

Viverra tackles the trust deficit in AI-generated code by automatically producing formally verified annotations, enhancing developer comprehension and productivity.

AI Delegation: Reliability Concerns Emerge

New Microsoft Research highlights how AI can degrade document fidelity in long, delegated tasks, stressing the need for better verification and orchestration.

WARDEN: Tackling Low-Resource Language AI

WARDEN pioneers a modular AI system for low-resource languages, using phoneme transfer and LLM-guided dictionaries to transcribe and translate Wardaman with minimal data.

GRIP-VLM: RL for Efficient Vision-Language Models

GRIP-VLM employs Reinforcement Learning for discrete Vision-Language Model pruning, achieving superior efficiency and adaptability.

LLMs Tame Software Requirements

VERIMED leverages LLMs and SMT solvers to formally audit natural-language software requirements, turning ambiguity into testable signals and boosting verified accuracy.

Real-Time Agentic AI Unlocked

New methods like Asynchronous I/O and Speculative Tool Calling slash latency for agentic AI, enabling real-time interactions on both cloud and edge devices.

Beyond Model Capability: The Harness for SE Agents

Autonomous software engineering agents' reliability hinges on a novel 'AI Harness' system, not just model capability, enabling verifiably correct changes.

LMPath: Semantics Supercharge UAV Search

LMPath integrates language and vision models to create semantically-aware exploration priors for UAVs, dramatically improving search mission efficiency over traditional geometric methods.

OpenAI Podcast: Image Generation's Renaissance

OpenAI researchers Kenji Hata and Adele Li discuss the 'renaissance' in AI image generation, highlighting new models, user creativity, and future possibilities.

Mind the Gap in Agent Observability

Microsoft's Amy Boyd and Nitya Narasimhan discuss the critical 'gap' in AI agent observability and the need for better tools.

Agentic AI Fails: Loops, Planning & Unsafe Tool Use

An IBM Advisory AI Engineer breaks down why agentic AI systems fail, focusing on infinite loops, planning errors, and unsafe tool use, and offers mitigation strategies.

MoE LLMs Confront Real-World Hardware Noise

Hardware noise in CIM systems degrades MoE LLM performance. ROMER, a new calibration framework, significantly improves accuracy by restoring load balance and stabilizing routing.

Auditing LLM Agent Skill Integrity

A new framework, Behavioral Integrity Verification (BIV), reveals 80% of LLM agent skills have implementation gaps, primarily due to oversight, and achieves 0.946 F1 for malicious skill detection.

Hybrid Agents Master GUI-Tool Orchestration

The ToolCUA agent overcomes hybrid action space uncertainty with a novel staged training pipeline, achieving state-of-the-art performance in GUI-Tool orchestration.

Beyond RGB: Grounding Vision-Language on Raw Sensor Data

PRISM-VL advances vision-language models by grounding them in raw camera measurements, not just RGB, significantly improving performance on challenging visual tasks.

AlphaGRPO: Reasoning-Enhanced Multimodal Generation

The AlphaGRPO framework enhances multimodal generation via GRPO and DVReward, enabling reasoning and self-correction without cold-start, validated across benchmarks.